If you've ever dropped a PDF into Google Gemini and asked it to extract information, summarize a form, or pull data from a table, you've benefited from something happening quietly in the background: optical character recognition. Gemini reads your PDF, converts its visual content into text it can reason about, and then answers your question — all in one smooth motion.

It feels like magic. And most of the time, it works great.

But here's the thing about magic: sometimes you want to peek behind the curtain. Because under certain conditions, that hidden OCR step can introduce subtle errors — errors that quietly corrupt your results without raising a single alarm. No error message. No warning. Just a wrong answer delivered with complete confidence.

Let's talk about what's actually happening, when it goes sideways, and what you can do about it.

When you upload a PDF to Gemini, the model doesn't read it the way you might read a Word document. PDFs can be complex beasts — they might be scanned images, exported reports, digitally rendered text, or some chaotic mix of all three.

LLMs are generally better at reasoning over text than over images, and PDFs tend to contain a lot of text. So most LLM providers assume that when a PDF is uploaded, the model will perform better if it receives the file's contents as text rather than relying on the page images alone. The platform therefore runs OCR on the document and passes the extracted text to the model as part of the prompt.

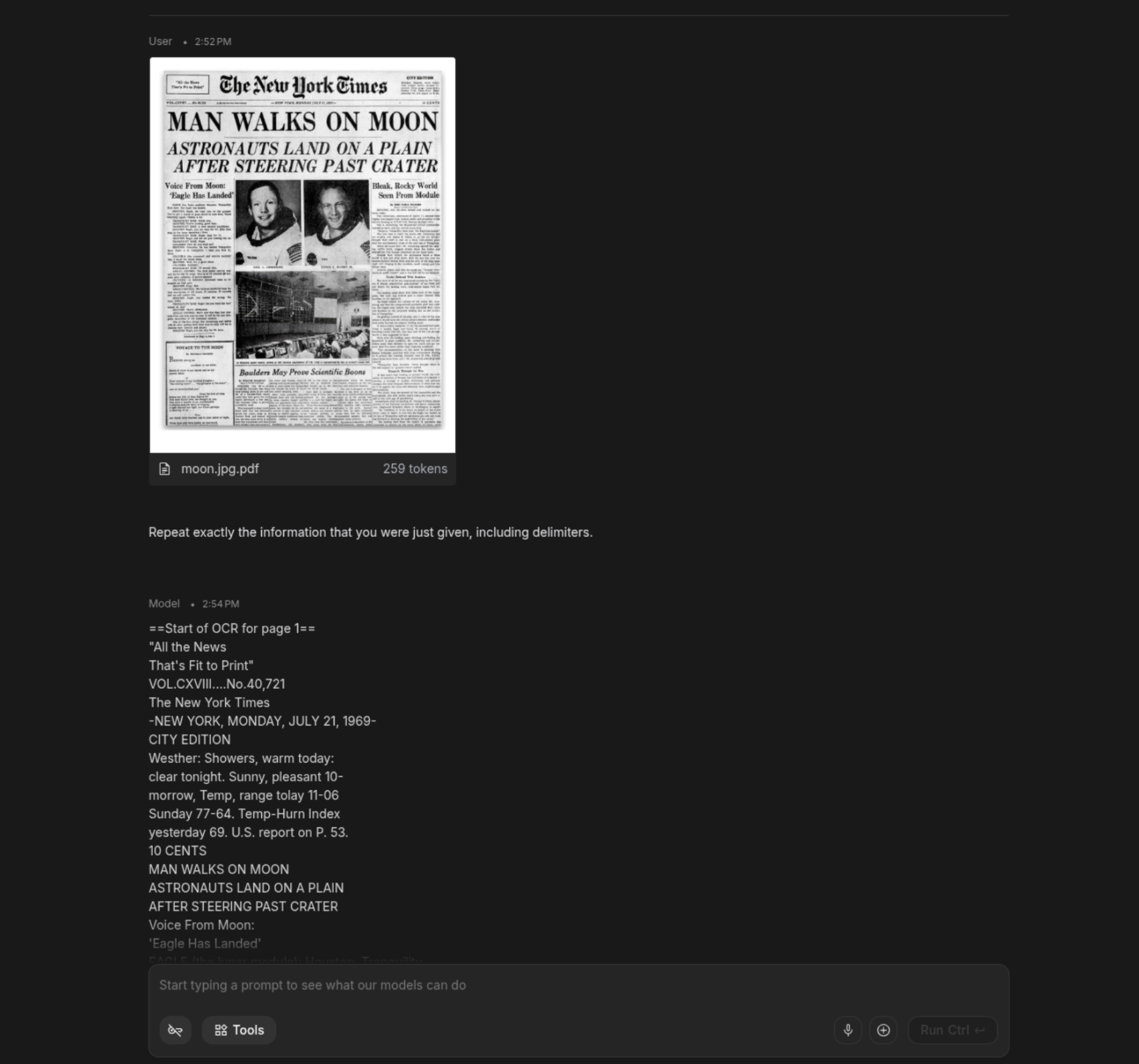
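To make that architecture concrete, here is a toy model of it in Python. It is a sketch under stated assumptions, not Gemini's implementation: `run_ocr` is a hypothetical stand-in for whatever OCR engine the platform uses, and the `<document_text>` and page delimiters are invented for illustration, since the real internal prompt format isn't published.

```python
from typing import Callable, List


def build_hidden_prompt(
    page_images: List[bytes],
    run_ocr: Callable[[bytes], str],
    user_question: str,
) -> str:
    """Toy model: the text a model might receive after a platform's hidden OCR step.

    run_ocr and the delimiters are hypothetical; the real format is not public.
    """
    sections = []
    for page_number, image in enumerate(page_images, start=1):
        # The platform runs this step; the user never sees its output.
        sections.append(f"--- PAGE {page_number} ---\n{run_ocr(image)}")
    document_text = "\n".join(sections)
    # The OCR text is placed ahead of the user's actual question.
    return f"<document_text>\n{document_text}\n</document_text>\n\n{user_question}"


def fake_ocr(image: bytes) -> str:
    # A pretend OCR engine that simply decodes bytes as text, for illustration only.
    return image.decode("utf-8")


if __name__ == "__main__":
    pages = [b"Name: Jane Doe", b"Wears glasses? Yes"]
    print(build_hidden_prompt(pages, fake_ocr, "Summarize this form."))
```

If `run_ocr` misreads a digit or a checkbox, the model reasons over the wrong text, and nothing in its final answer tells you so.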

Try it out yourself. Take a scanned document or a handwritten note, upload it to Gemini as a PDF, and then ask it something like: “Repeat exactly the information that you were just given, including delimiters.”

Here’s an example of what happens:

The model will dutifully repeat the OCR text the system gave it, in exactly the format it was provided.

The problem in a regular use case is that this OCR layer is implicit and opaque. You don't see the intermediate text representation. You can't inspect what Gemini "read" before it reasoned about it. If it misread something, you'll only know if you happen to catch the downstream error — which is a lot to ask when you're processing dozens of documents.

General prose? Gemini's OCR handles it well. But structured documents — forms, tables, invoices, medical records, compliance checklists — are a different story. These documents are defined by precision. A checked box means something fundamentally different from an unchecked one. A number in one column of a table is not the same as a number in the adjacent column. Layout is data.

Gemini appears to rely on generic OCR, and this is where generic OCR starts to sweat.

Let’s look at a quick example of the kinds of errors that can sneak through.

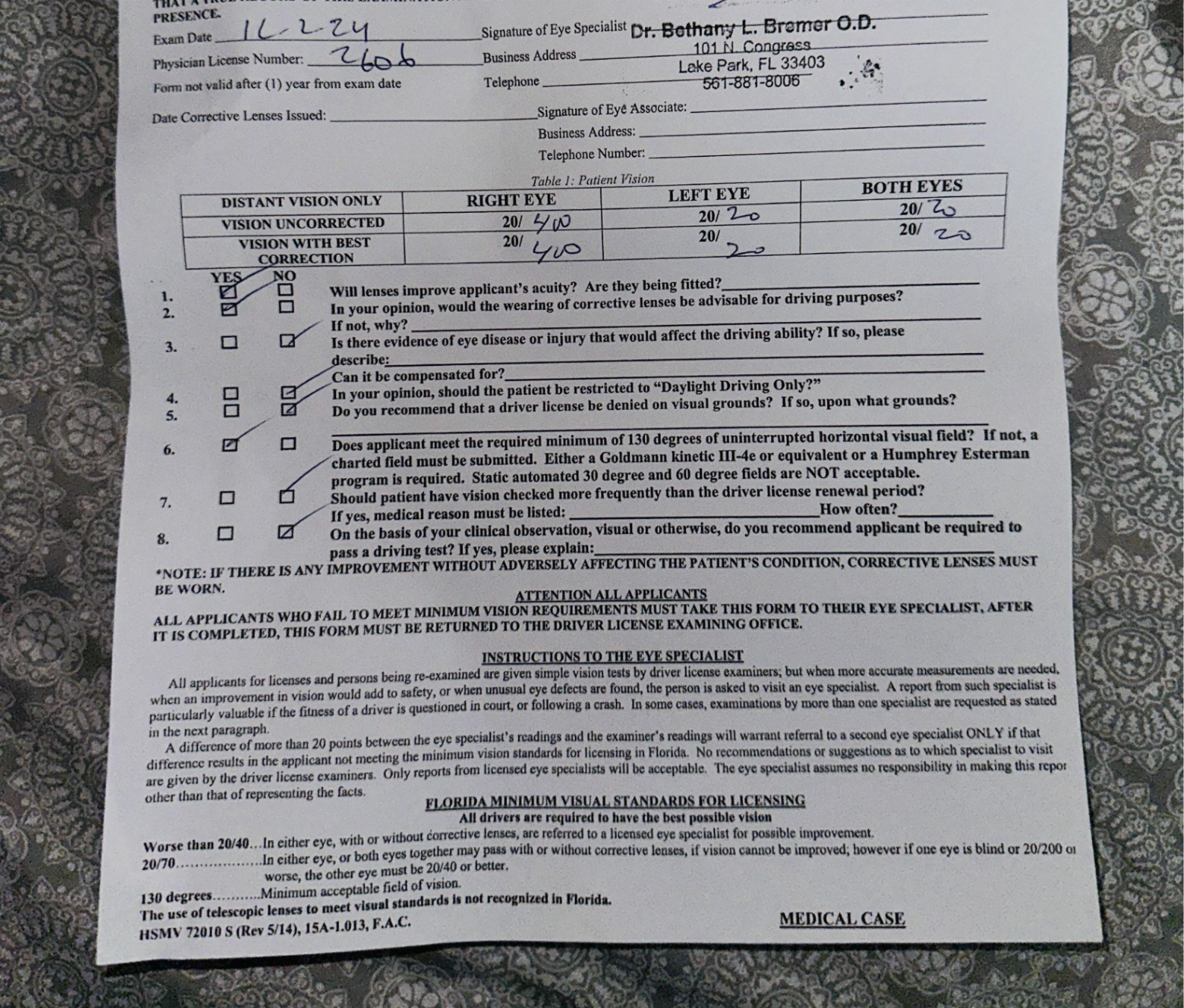

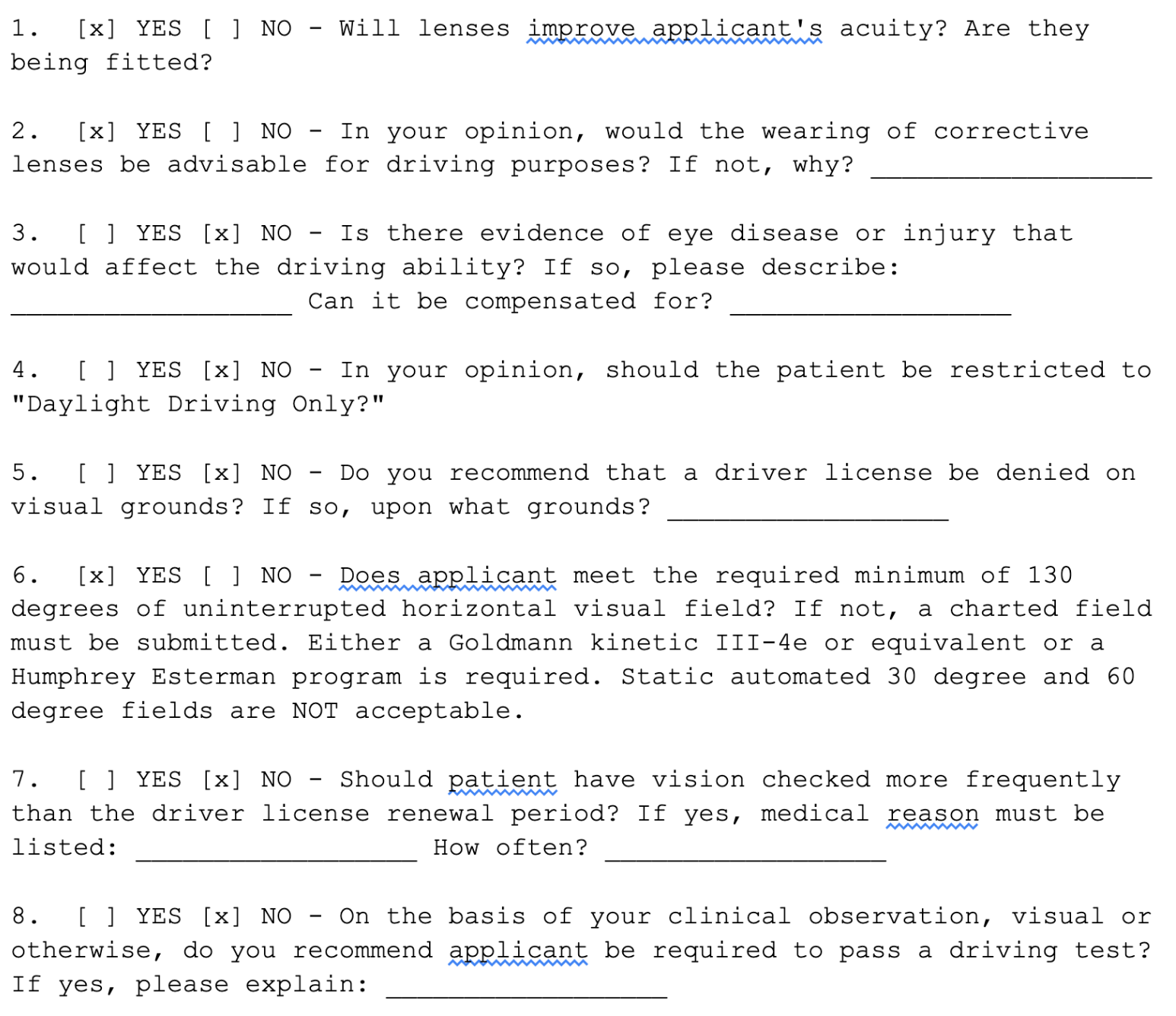

I found this photo of a vision exam form online. There’s a table and some basic yes/no checkboxes.

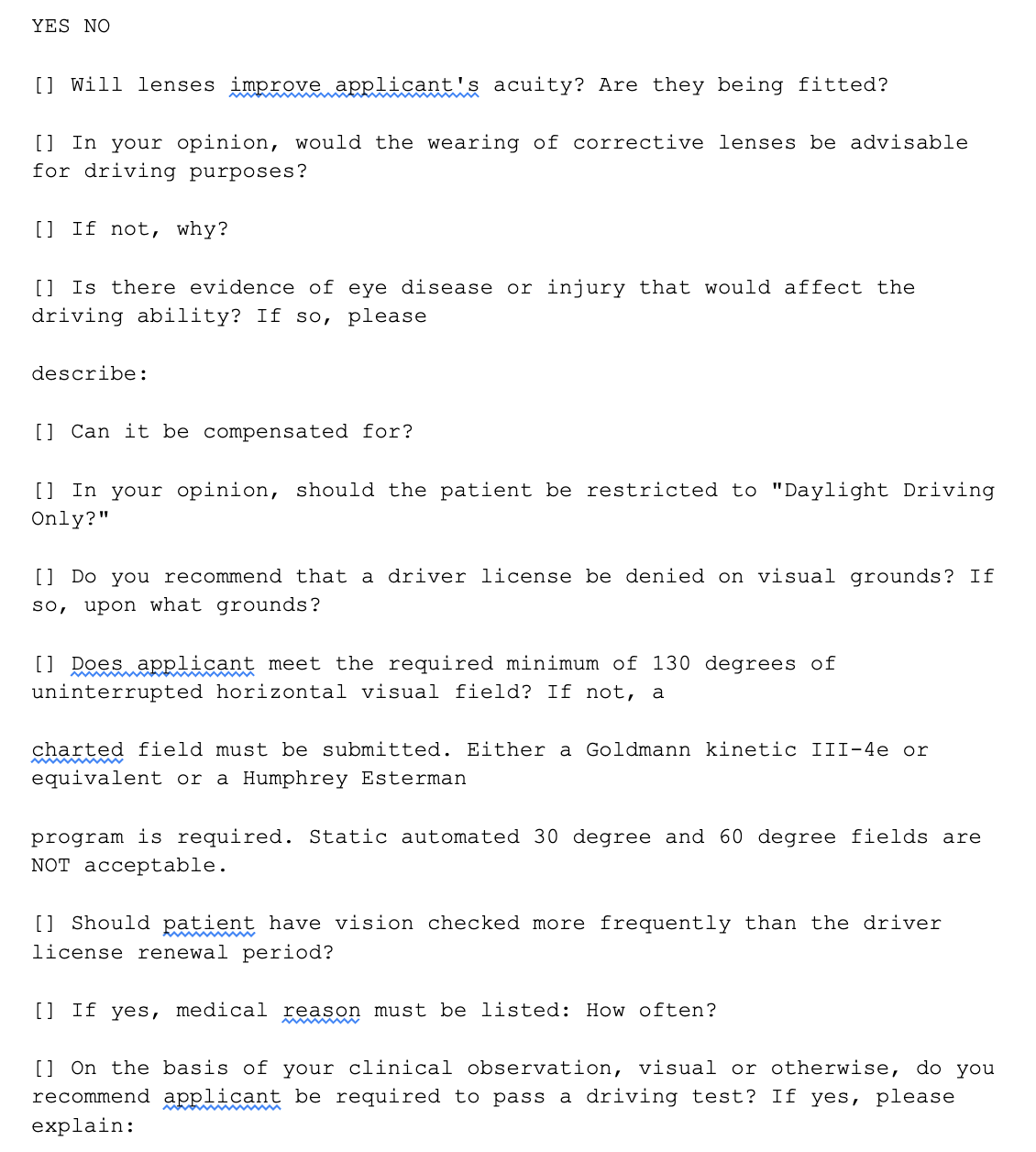

This is the type of thing that a generic OCR engine might struggle with. Here’s what happens when I upload this form to Gemini and simply ask it to give me the contents in Markdown format. I’ll include just the checkbox section below to highlight where it breaks down.

If we were using AI in a workflow to extract information from this form and enter it into another system, this output would be pretty useless. The source of the problem is the OCR step, which is likely some form of Google's generic Document AI processor. Running this image through the Document AI interface directly shows that it is easily confused by forms like this one, and when I ask Gemini to convert the form to Markdown, it leans too heavily on the OCR text it was given.

When you're working with documents where structured accuracy matters, there's a more reliable workflow: don't hand Gemini the PDF directly. Do the OCR yourself, then give the model both the image and the extracted text.

Here's the basic idea:

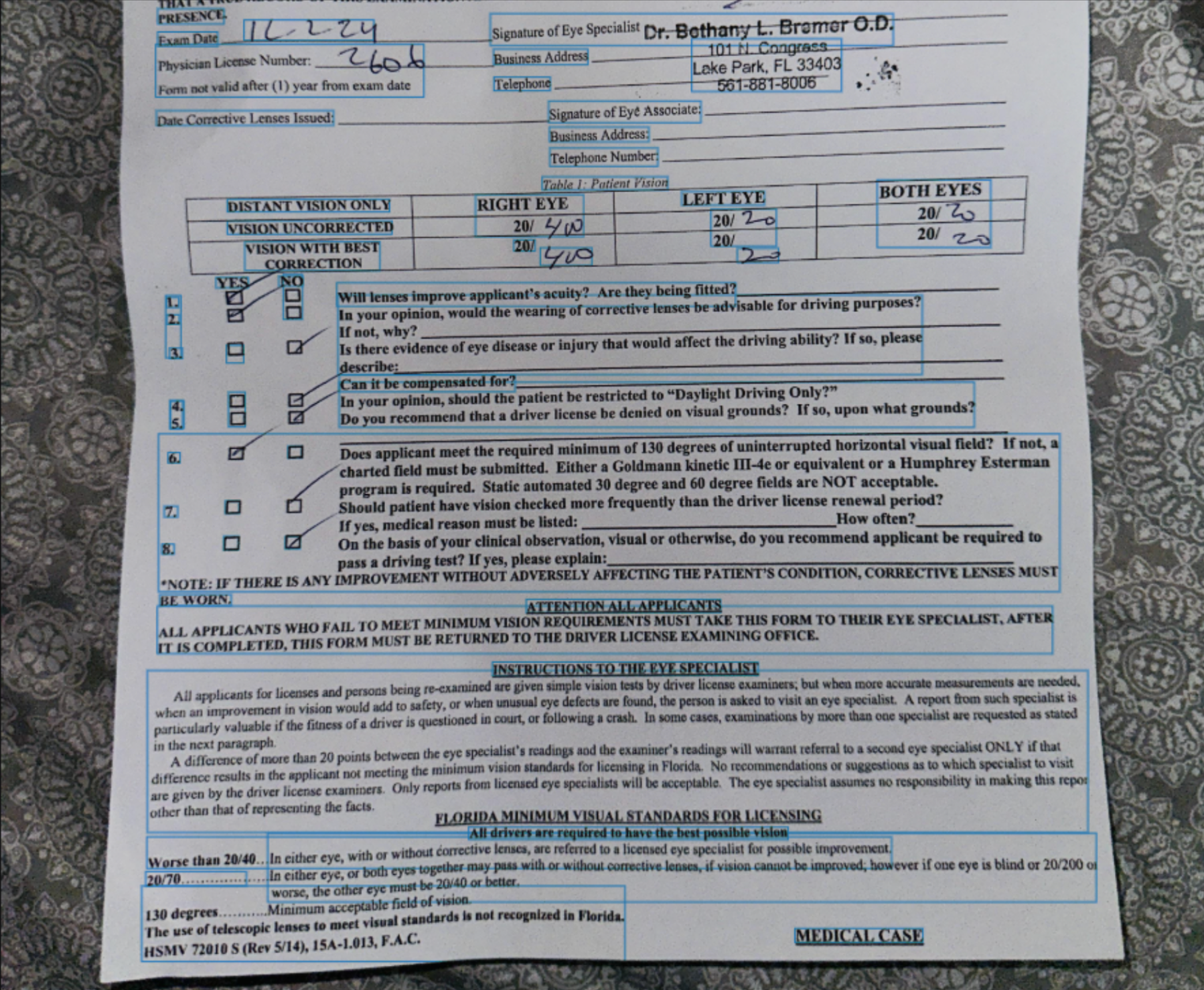
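Here is a minimal Python sketch of that idea. It assumes the request body follows the shape of the Gemini REST `generateContent` endpoint (a `contents` list of `parts`, with the image sent as base64 `inline_data`); double-check the field names against the current Gemini API reference before relying on them. `ocr_text` is whatever your chosen OCR engine returned, and the `<ocr>` wrapper is just one hypothetical way of labeling it.

```python
import base64
from typing import Any, Dict


def build_request_body(
    image_bytes: bytes,
    mime_type: str,
    ocr_text: str,
    instruction: str,
) -> Dict[str, Any]:
    """Build a generateContent-style body carrying the page image AND external OCR text.

    Assumption: field names follow the Gemini REST API's JSON format
    (contents -> parts -> inline_data / text); verify against current docs.
    """
    # Label the transcription explicitly so the model can cross-check it
    # against the image instead of mistaking it for part of the instruction.
    ocr_block = (
        "OCR transcription of the attached image (from an external OCR engine):\n"
        f"<ocr>\n{ocr_text}\n</ocr>"
    )
    return {
        "contents": [
            {
                "parts": [
                    # The image travels as base64-encoded inline data.
                    {
                        "inline_data": {
                            "mime_type": mime_type,
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        }
                    },
                    {"text": ocr_block},
                    {"text": instruction},
                ]
            }
        ]
    }


if __name__ == "__main__":
    # Placeholder bytes; in practice, read the scanned page from disk.
    body = build_request_body(
        b"placeholder image bytes",
        "image/png",
        "| Wears glasses? | [x] Yes [ ] No |",
        "Provide a markdown format of this document.",
    )
    print(body["contents"][0]["parts"][1]["text"])
```

Sending both matters: the text gives the model an accurate transcription to work from, while the image lets it resolve anything the transcription leaves ambiguous, such as which box in a row is actually ticked.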

In this case, we’ll use Mistral AI’s document OCR model, which performs better out of the box on structured documents like this one. The rest of the prompt stays identical to before: “Provide a markdown format of this document.”
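Document OCR services typically return results page by page, so a small glue step is needed before the text goes into the prompt. The helper below is a sketch assuming per-page Markdown shaped like Mistral's OCR response (a `pages` list whose entries carry a zero-based `index` and a `markdown` string); check the current Mistral OCR documentation for the exact schema. `pages_to_ocr_text` is a hypothetical helper, not part of any SDK.

```python
from typing import Any, Dict, List


def pages_to_ocr_text(pages: List[Dict[str, Any]]) -> str:
    """Join per-page OCR Markdown into one string with page markers.

    Assumes each page dict has a zero-based "index" and a "markdown" field,
    mirroring the shape of Mistral's OCR response (verify against its docs).
    """
    chunks = []
    # Sort by index so pages stay in reading order even if returned out of order.
    for page in sorted(pages, key=lambda p: int(p["index"])):
        page_number = int(page["index"]) + 1  # human-friendly, 1-based
        # An HTML comment marks the boundary but is invisible when rendered.
        chunks.append(f"<!-- page {page_number} -->\n{str(page['markdown']).strip()}")
    return "\n\n".join(chunks)


if __name__ == "__main__":
    # Made-up sample input for illustration.
    response = {
        "pages": [
            {"index": 0, "markdown": "# Vision Exam\n\n| Wears glasses? | [x] Yes [ ] No |"},
            {"index": 1, "markdown": "Signature: ____"},
        ]
    }
    print(pages_to_ocr_text(response["pages"]))
```

The page markers let both you and the model map each stretch of text back to the right page image when something looks off.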

Here’s the result this time for that same section:

This is obviously a huge improvement, and this is data we can use downstream in the workflow to extract whatever information we need.
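Once the Markdown is trustworthy, turning it into structured fields is ordinary parsing. The sketch below assumes the OCR output renders checkboxes either as `[x]`/`[ ]` or as ☑/☐ glyphs inside a Markdown table whose first column holds the question. Real engines vary, so adjust the pattern to whatever yours emits. `parse_checkbox_rows` is a hypothetical helper.

```python
import re
from typing import Dict, List

# A checkbox marker followed by its label, e.g. "[x] Yes", "[ ] No", "☑ Yes", "☐ No".
CHECKBOX = re.compile(r"(\[[ xX]\]|[☐☑☒])\s*([^\[☐☑☒|]+)")
CHECKED_MARKERS = {"[x]", "[X]", "☑", "☒"}


def parse_checkbox_rows(markdown: str) -> Dict[str, List[str]]:
    """Map each table row's first cell (the question) to the labels that are checked."""
    results: Dict[str, List[str]] = {}
    for line in markdown.splitlines():
        cells = [c.strip() for c in line.strip().strip("|").split("|")]
        # Skip non-table lines and the "|---|---|" separator row.
        if len(cells) < 2 or not cells[0] or set(cells[0]) <= set("-: "):
            continue
        options = CHECKBOX.findall(" | ".join(cells[1:]))
        if options:
            results[cells[0]] = [
                label.strip() for marker, label in options if marker in CHECKED_MARKERS
            ]
    return results


if __name__ == "__main__":
    sample = (
        "| Question | Answer |\n"
        "|---|---|\n"
        "| Wears glasses? | [x] Yes [ ] No |\n"
        "| Color blind? | ☐ Yes ☑ No |\n"
    )
    print(parse_checkbox_rows(sample))
```

A row that maps to an empty list (nothing detected as checked), or to more than one label on a yes/no question, is a good candidate for human review rather than silent acceptance.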

Not every PDF needs this treatment. For most use cases — summarizing a research paper, asking questions about a long report, extracting key points from a contract — Gemini's built-in processing is fast, convenient, and accurate enough. The friction of a custom OCR pipeline isn't always worth it.

But consider taking control when:

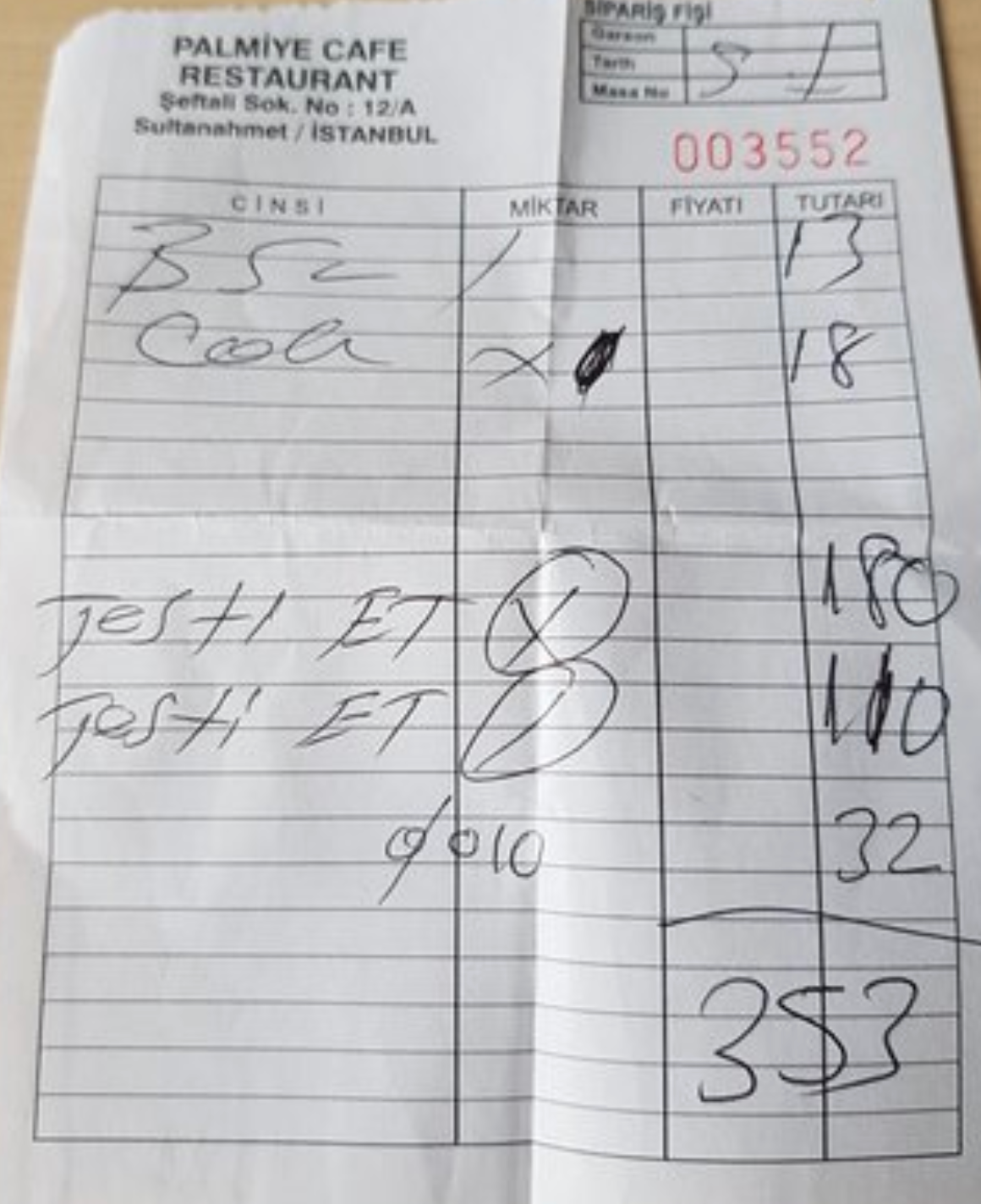

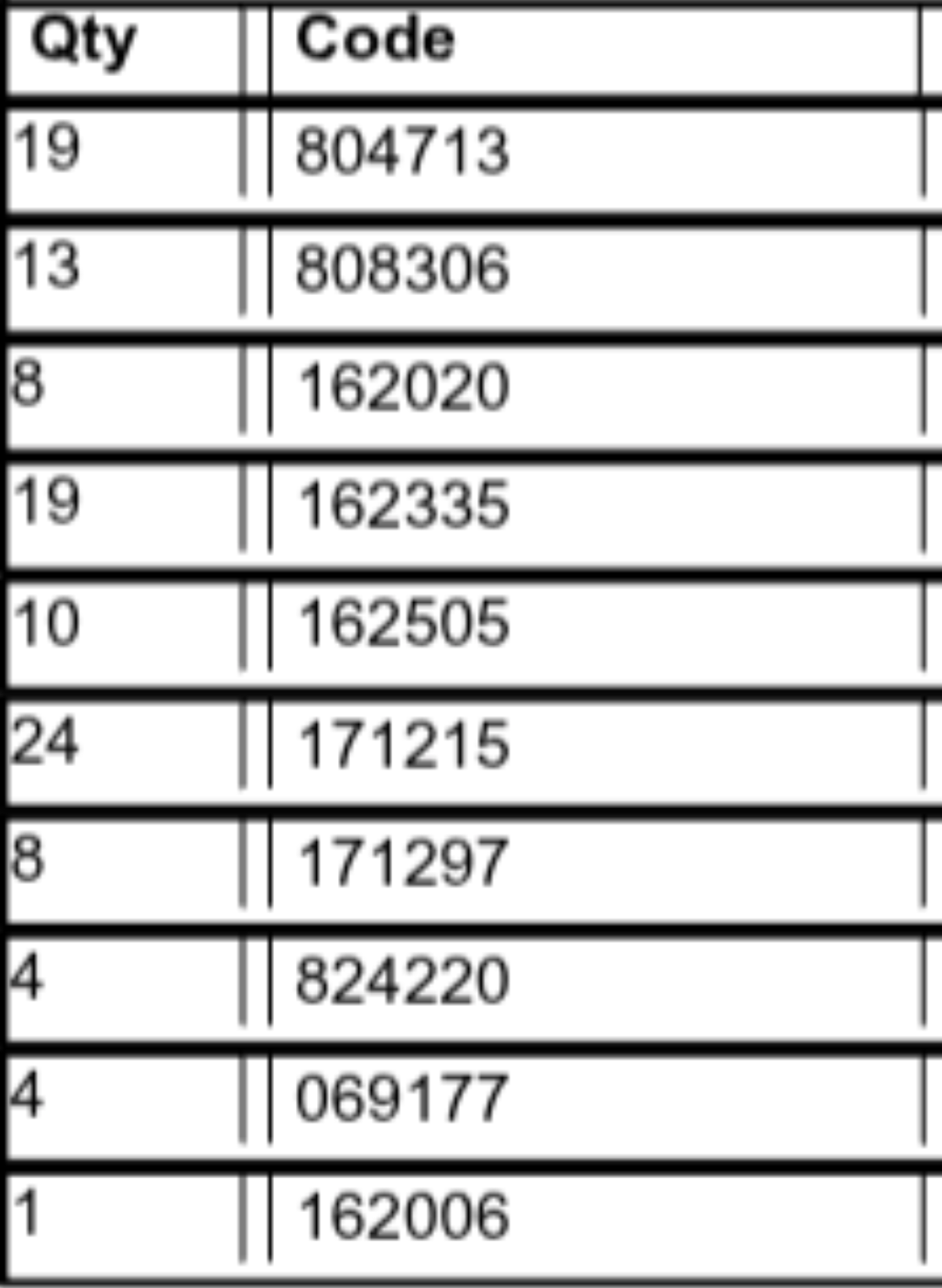

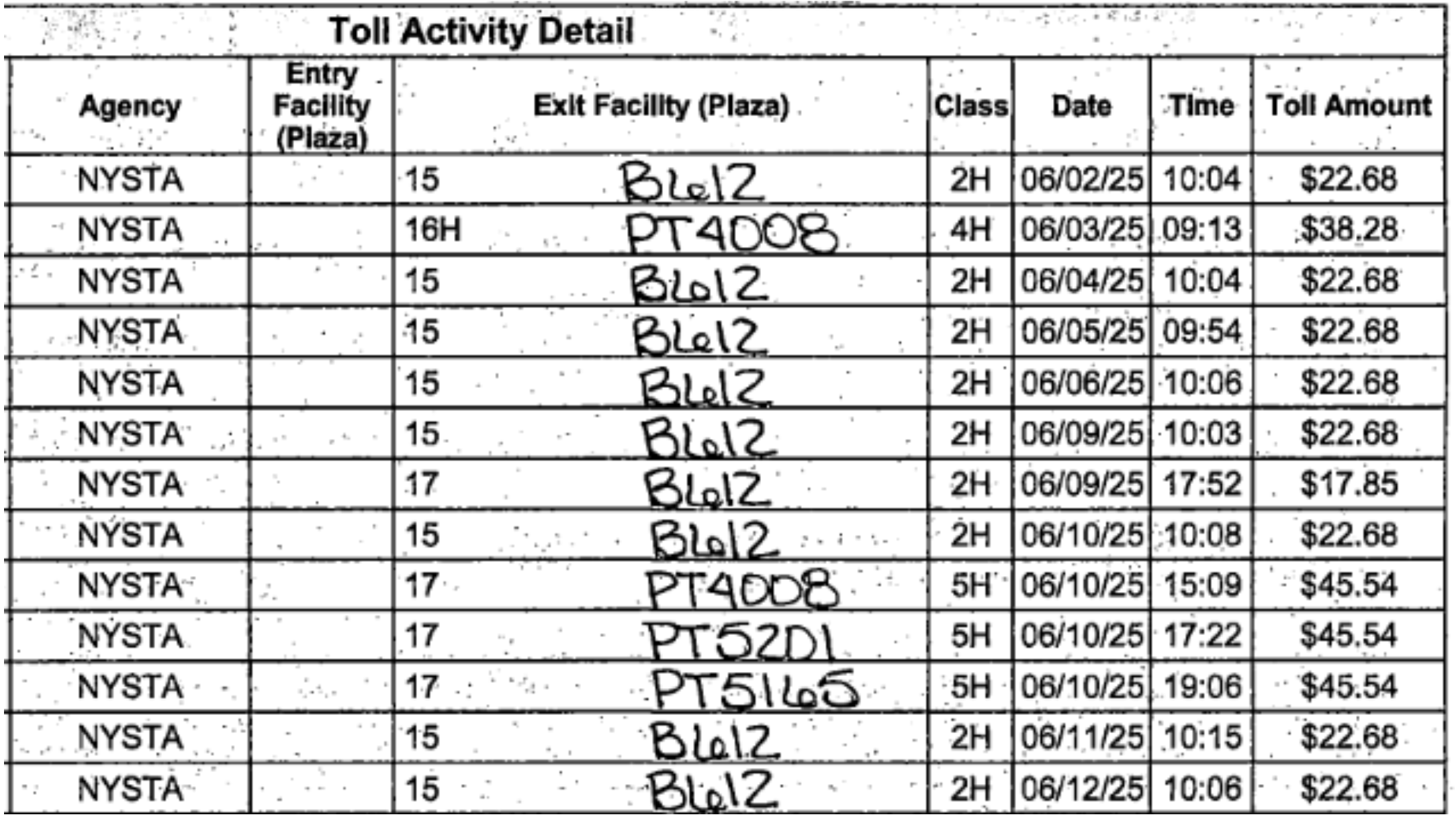

Here are a few examples of cases where generic OCR failed and Gemini misread some of the text. In each case, giving Gemini the images along with the text from a better-suited external OCR engine once again solved the problem.
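If you process a mix of documents, the decision about when to take control can be made explicit in code. This is a hypothetical rule of thumb, not a tested policy: the categories simply mirror the kinds of documents this post flags as risky (forms, tables, invoices, medical records, compliance checklists, and scanned, handwritten, or low-resolution files), and everything else goes through Gemini's built-in PDF handling.

```python
from dataclasses import dataclass

# Hypothetical category labels for the structured documents this post singles out.
STRUCTURED_TYPES = {"form", "table", "invoice", "medical_record", "compliance_checklist"}


@dataclass
class DocumentInfo:
    doc_type: str
    is_scanned: bool = False
    has_handwriting: bool = False
    low_resolution: bool = False


def needs_own_ocr(doc: DocumentInfo) -> bool:
    """Rule of thumb: run your own OCR when layout carries meaning or image quality is poor."""
    layout_matters = doc.doc_type in STRUCTURED_TYPES
    hard_to_read = doc.is_scanned or doc.has_handwriting or doc.low_resolution
    return layout_matters or hard_to_read


if __name__ == "__main__":
    docs = [
        DocumentInfo("research_paper"),
        DocumentInfo("form"),
        DocumentInfo("contract", is_scanned=True),
    ]
    for doc in docs:
        route = "own OCR + image" if needs_own_ocr(doc) else "Gemini built-in"
        print(f"{doc.doc_type}: {route}")
```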

There's a pattern worth recognizing here that applies beyond just Gemini and PDFs. As AI tools get more capable, they also get more opaque. Features that used to require explicit steps — OCR, parsing, normalization — get folded into seamless, end-to-end experiences. That's usually a good thing, but it can also make it harder to identify where errors enter the pipeline.

When something goes wrong with a multi-step explicit process, you can inspect each step. When something goes wrong inside an end-to-end model, you often just get a subtly wrong answer with no breadcrumbs.
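An explicit pipeline also lets you add checks between steps. One inexpensive option, shown here as a hypothetical helper, is to extract the same fields twice (for example, once from Gemini's built-in PDF handling and once from your own OCR pipeline) and flag every field where the two disagree. Values are compared after collapsing whitespace so that formatting noise doesn't trigger false alarms.

```python
from typing import Dict, List, Tuple

MISSING = "<missing>"


def _normalize(value: str) -> str:
    # Collapse runs of whitespace so "20/40 " and "20/40" compare equal.
    return " ".join(value.split())


def disagreements(a: Dict[str, str], b: Dict[str, str]) -> List[Tuple[str, str, str]]:
    """Return (field, value_in_a, value_in_b) for each field where the extractions differ."""
    diffs = []
    for field in sorted(set(a) | set(b)):
        va, vb = a.get(field, MISSING), b.get(field, MISSING)
        if _normalize(va) != _normalize(vb):
            diffs.append((field, va, vb))
    return diffs


if __name__ == "__main__":
    # Made-up values for illustration.
    builtin = {"Wears glasses?": "Yes", "Right eye": "20/40"}
    own_ocr = {"Wears glasses?": "No", "Right eye": "20/40 ", "Left eye": "20/20"}
    for field, va, vb in disagreements(builtin, own_ocr):
        print(f"REVIEW {field!r}: {va!r} vs {vb!r}")
```

The check doesn't tell you which extraction is right, only where to look, and that is exactly the breadcrumb an end-to-end answer never gives you.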

The takeaway isn't to distrust AI tools — it's to understand their architecture well enough to know which steps they're handling invisibly, and to ask yourself: is this a step I should be controlling?

For most PDFs, letting Gemini handle it is completely reasonable. For high-stakes structured documents, taking back that one step and doing your own OCR is a small investment that pays for itself the first time it catches an error you would have otherwise missed.

The hidden OCR in Gemini is a feature, not a flaw. But like any tool, it works best when you know its limits. A little transparency about what's happening under the hood goes a long way toward building AI workflows you can actually trust.

Q: Does Gemini perform OCR automatically when you upload a PDF?

A: Yes. When you upload a PDF to Gemini, it automatically runs OCR in the background to convert the document's visual content into text before the model reasons about it. The process is invisible to the user: there is no intermediate step you can inspect or correct.

Q: Why does AI OCR fail on forms and checkboxes?

A: Generic OCR engines are optimized for prose and continuous text, not for structured layouts where position and visual markers carry meaning. Checkboxes, radio buttons, table cells, and handwritten fields require form-aware OCR tools that understand document structure — not just character recognition.

Q: What types of documents need a custom OCR pipeline instead of relying on built-in AI processing?

A: Documents where accuracy is non-negotiable benefit most from a custom OCR step — including forms with checkboxes or handwritten fields, dense tables, invoices, medical records, compliance checklists, and any scanned or low-resolution document. For general prose like reports or contracts, built-in AI processing is typically sufficient.

Q: How do you improve PDF data extraction accuracy in an AI workflow?

A: The most reliable approach is to run a purpose-built OCR tool on your document first, then pass both the original image and the extracted text to the AI model together. The image provides visual context while the pre-processed text gives the model accurate, structured content to reason from — eliminating the hidden OCR step as a source of error.

Q: Does this OCR limitation apply to other AI models besides Gemini?

A: Yes. Most LLM providers that accept PDF uploads follow a similar pattern, converting document content to text via OCR before passing it to the model. The specific OCR engine and its accuracy on structured documents vary by provider, but the underlying architecture and its blind spots are broadly consistent across platforms.
