Why Building Your Own AI Agent Is Riskier Than You Think

Building your own AI agent for order processing is far riskier than buying a purpose-built one. Homegrown agents combine three capabilities that security researcher Simon Willison calls the “lethal trifecta”: access to sensitive customer data, exposure to untrusted inputs like emailed purchase orders, and the ability to act on business-critical systems. OWASP’s 2025 Top 10 for LLM Applications ranks prompt injection as the number-one critical vulnerability in AI systems. OpenAI has stated this attack vector is “unlikely to ever be fully solved.”

Purpose-built platforms like Y Meadows are designed differently. AI parses your purchase orders into a fixed schema and stops there. The workflow, not the AI, decides what happens next. The model has no tool access, the platform is tenant-isolated, ERP access is least-privilege and vetted by your IT team, and there’s an optional human review step on top.

Why Are Operations Leaders Tempted to Build Their Own?

The appeal is understandable. LLM APIs are easy to call, open-source frameworks are everywhere, and your dev team is itching to experiment. When you’re processing hundreds of purchase orders daily from emails, PDFs, and portals, the idea of wiring up an AI agent that reads, extracts, and posts orders directly into your ERP feels like a weekend project. A PwC survey of over 300 U.S. executives found that 79% of organizations are already using AI agents, and 88% plan to increase AI-related budgets in the next twelve months.

But as Wall Street Journal technology columnist Christopher Mims recently observed, the rush to “give AI total control” of critical systems “is going to look so foolish in retrospect.” The gap between a working prototype and a production-safe system is where most DIY agent projects quietly fail. That gap is where the real risk lives.

What Is the “Lethal Trifecta” and Why Does It Apply to Order Processing?

The lethal trifecta, a framework coined by Simon Willison in June 2025, identifies three capabilities that create a critical security vulnerability when combined in a single AI agent. Any one element is manageable. Two raises the risk. But all three together allow an attacker to trick the agent into accessing sensitive data and exfiltrating it externally.

A DIY AI agent built for order processing almost always hits all three: it reads customer data and pricing from your ERP, it ingests untrusted content from emailed purchase orders, and it writes or communicates back into business-critical systems. That’s the exact architecture attackers exploit through prompt injection.

Definition: The Lethal Trifecta (Simon Willison, June 2025)

1. Access to private data — customer records, pricing, order history, ERP credentials

2. Exposure to untrusted content — incoming emails, PDF attachments, web portal submissions that could contain hidden instructions

3. Ability to externally act — posting orders to ERP, sending confirmations, making API calls, or any mechanism that could alter data or transmit information outward

When all three combine in a DIY agent, an attacker can exploit it to corrupt orders, exfiltrate data, or manipulate your ERP.

What Goes Wrong When You Build AI Agents In-House?

The security problem is only the beginning. Internal AI agent projects face a cascade of challenges that are easy to underestimate during a proof-of-concept but become critical in production.

Prompt injection is where this gets ugly. LLMs treat all input as a single stream of tokens, whether it comes from your system prompt or from a customer’s emailed purchase order. A malicious or even accidentally malformed PO can push the agent into behavior you didn’t ask for. OWASP ranks this as the number-one LLM vulnerability, and Pillar Security research shows that 20% of jailbreak attempts succeed in under 42 seconds.

Beyond security, DIY agents suffer from reliability gaps. No two customers’ order formats look the same: handwritten notes on PDFs, Excel attachments with merged cells, email bodies with inconsistent formatting. A homegrown agent has to handle that long tail from scratch: schema extraction, entity resolution against your customer and product catalogs, validation rules. Without a parsing pipeline engineered and proven against thousands of real-world purchase orders, error rates climb quickly. Every error means a misshipped pallet, a credit memo your AR team has to chase, or a customer-service call your team didn’t need to have.

Then there’s the integration burden. Connecting an AI agent safely to ERP systems like SAP, Oracle, or Dynamics requires more than an API call. It requires scoped permissions, transaction validation, rollback handling, and audit trails. Most internal teams underestimate this work by months.

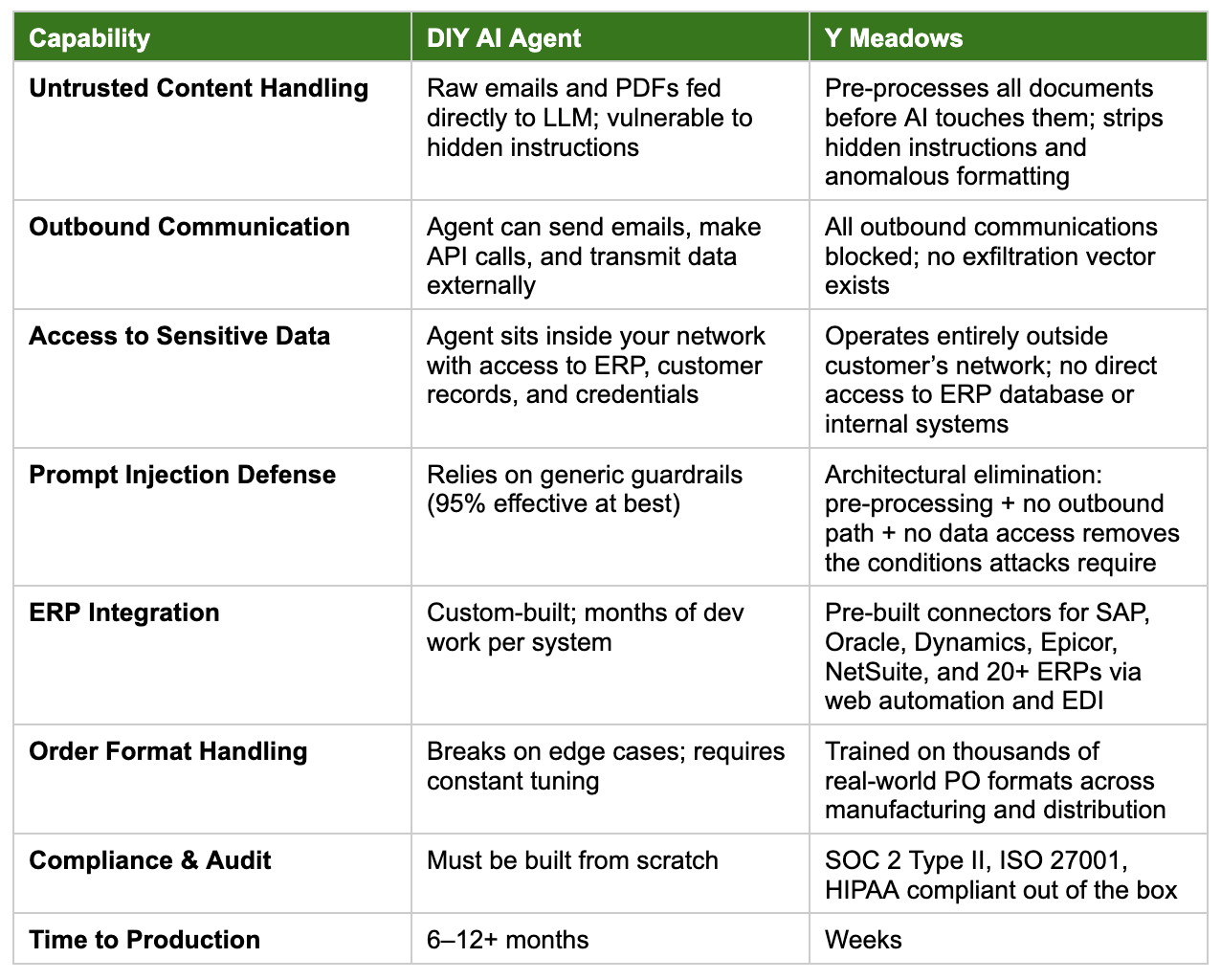

How Does DIY Compare to Purpose-Built Order Automation?

How Does Y Meadows Break Every Leg of the Lethal Trifecta?

Guardrails on top of a vulnerable architecture won’t get you there. The fix is to design a system where the trifecta never combines into a usable attack in the first place. Y Meadows uses AI inside a fixed, low-code workflow rather than as an autonomous agent. That structural choice, plus tenant isolation, least-privilege integration, and optional human review, defuses each leg of the trifecta.

Leg 1 - Untrusted content: Y Meadows treats every incoming document as untrusted, but the AI’s role is narrow. The pipeline first prepares the file, then runs a multi-stage parsing process that extracts content into a fixed order schema. The AI is a parser. It doesn’t choose what to do with what it reads. Even if a malicious purchase order tries to issue instructions, there is no agent listening for them. The next step in the workflow is whatever the workflow says it is.

Leg 2 - Ability to act externally: The AI has no tool access. An attacker who slips a prompt past the parser still has no lever to pull: the model cannot send emails, call APIs, or write to your ERP. Every action that touches an outside system runs in the deterministic workflow, not in the model. Decisions about credit holds, pricing exceptions, and customer-specific routing are encoded as procedural policies, the same ones your team uses today. The workflow executes them; an LLM never gets to interpret them. An optional human-in-the-loop review step lets your team approve parsed orders side-by-side with the original document before anything reaches your ERP.

Leg 3 - Access to private data: Y Meadows is a multi-tenant SaaS platform with strict tenant separation, kept distinct from your ERP and other internal systems. The platform delivers parsed orders into your ERP through a least-privilege integration (API, middleware, or SFTP/S3 file exchange) chosen and vetted by your IT department. Customer and product matching uses vector search and LLM-assisted fuzzy matching against your catalog. The AI operates on a scoped lookup, not on the rest of your enterprise.

As Willison himself puts it: “the LLM vendors are not going to save us.” The architecture has to do the work. Y Meadows doesn’t ask an LLM to be trustworthy with your business. We use AI for what LLMs are good at, reading messy documents and matching entities, and we rely on a deterministic workflow, vetted integrations, and optional human review for everything else. The platform carries SOC 2 Type II and ISO 27001, both reaudited annually, with regular security updates on top. The posture your IT team signs off on at go-live is the one you have a year later.

Should You Build or Buy AI Order Automation?

AI can transform order processing. That’s not in question. Among organizations adopting AI agents, 66% report measurable increases in productivity and 57% report cost savings, according to PwC. The question is whether your organization should absorb the security, reliability, and integration risks of building that capability from scratch.

For most mid-market manufacturers and distributors, the answer is clear. Building, securing, and maintaining a production-grade AI agent that safely handles the lethal trifecta far exceeds the cost of a purpose-built platform that has already solved these problems across hundreds of deployments.

Frequently Asked Questions

Q: What is the “lethal trifecta” for AI agents?

A: The lethal trifecta is a security framework coined by Simon Willison in June 2025. It names three capabilities that create a critical vulnerability when combined: access to private data, exposure to untrusted content, and the ability to act externally. A DIY AI agent for order processing typically has all three, which is what leaves it open to prompt injection attacks that corrupt orders or exfiltrate sensitive data.

Q: Can guardrails fully prevent prompt injection in a homegrown AI agent?

A: No. OpenAI stated in December 2025 that prompt injection is “unlikely to ever be fully solved.” Most guardrail solutions claim around 95% effectiveness, which still leaves a meaningful gap in a system processing hundreds of orders daily. Gartner predicts that 25% of enterprise breaches will trace to AI agent abuse by 2028.

Q: How long does it take to build a production-ready AI agent for order processing?

A: Internal builds typically require 6–12 months or more to reach production readiness, and that timeline often expands significantly once ERP integration, security hardening, and edge-case handling are factored in. Purpose-built platforms like Y Meadows can go live in weeks because the security architecture, ERP connectors, and order format handling are already proven.

Q: How does Y Meadows avoid the lethal trifecta risk?

A: Y Meadows is built so the conditions that make prompt injection dangerous don’t apply in the first place. Instead of an autonomous agent, AI is one step in a fixed, low-code workflow that handles document parsing and entity resolution and nothing else. The AI has no tool access. The workflow, not the model, decides what happens to each order. The platform is tenant-isolated and kept separate from your ERP, and ERP integration runs on least-privilege API, middleware, or SFTP/S3 access vetted by your IT team. Optional human review lets your team approve parsed orders side-by-side with the original document before anything is committed.

Q: Is the DIY approach ever the right choice?

A: For organizations with dedicated AI/ML engineering teams, extensive security expertise, and order volumes that justify multi-year development investment, building internally can work. For most mid-market manufacturers and distributors, however, the risk-to-reward ratio strongly favors a purpose-built solution that has already solved these challenges at scale.

See How Y Meadows Handles Order Automation Securely

Skip the build risk. Our team will map the best integration approach for your ERP, your order formats, and your workflow—with enterprise-grade security built in from day one. Speak with an Expert → use.ymeadows.com/talktoymeadows

Frequently Asked Questions

The lethal trifecta is a security framework coined by Simon Willison in June 2025 identifying three capabilities that create a critical vulnerability when combined: access to private data, exposure to untrusted content, and the ability to externally act. A DIY AI agent for order processing typically combines all three, making it vulnerable to prompt injection attacks that can corrupt orders or exfiltrate sensitive data.

No. OpenAI stated in December 2025 that prompt injection is “unlikely to ever be fully solved.” Most guardrail solutions claim around 95% effectiveness, which still leaves a meaningful gap in a system processing hundreds of orders daily. Gartner predicts that 25% of enterprise breaches will trace to AI agent abuse by 2028.

Internal builds typically require 6–12 months or more to reach production readiness, and that timeline often expands significantly once ERP integration, security hardening, and edge-case handling are factored in. Purpose-built platforms like Y Meadows can go live in weeks because the security architecture, ERP connectors, and order format handling are already proven.

Y Meadows is built so the conditions that make prompt injection dangerous don’t apply in the first place. Instead of an autonomous agent, AI is one step in a fixed, low-code workflow that handles document parsing and entity resolution and nothing else. The AI has no tool access. The workflow, not the model, decides what happens to each order. The platform is tenant-isolated and kept separate from your ERP, and ERP integration runs on least-privilege API, middleware, or SFTP/S3 access vetted by your IT team. Optional human review lets your team approve parsed orders side-by-side with the original document before anything is committed.

For organizations with dedicated AI/ML engineering teams, extensive security expertise, and order volumes that justify multi-year development investment, building internally can work. For most mid-market manufacturers and distributors, however, the risk-to-reward ratio strongly favors a purpose-built solution that has already solved these challenges at scale.